The Hidden War for AI Intelligence: Inside the 16-Million-Exchange Distillation Attack on Anthropic's Claude

The frontier of artificial intelligence isn’t just about who can build the smartest model—it’s increasingly about who can protect it from being systematically pillaged.

Key Takeaways

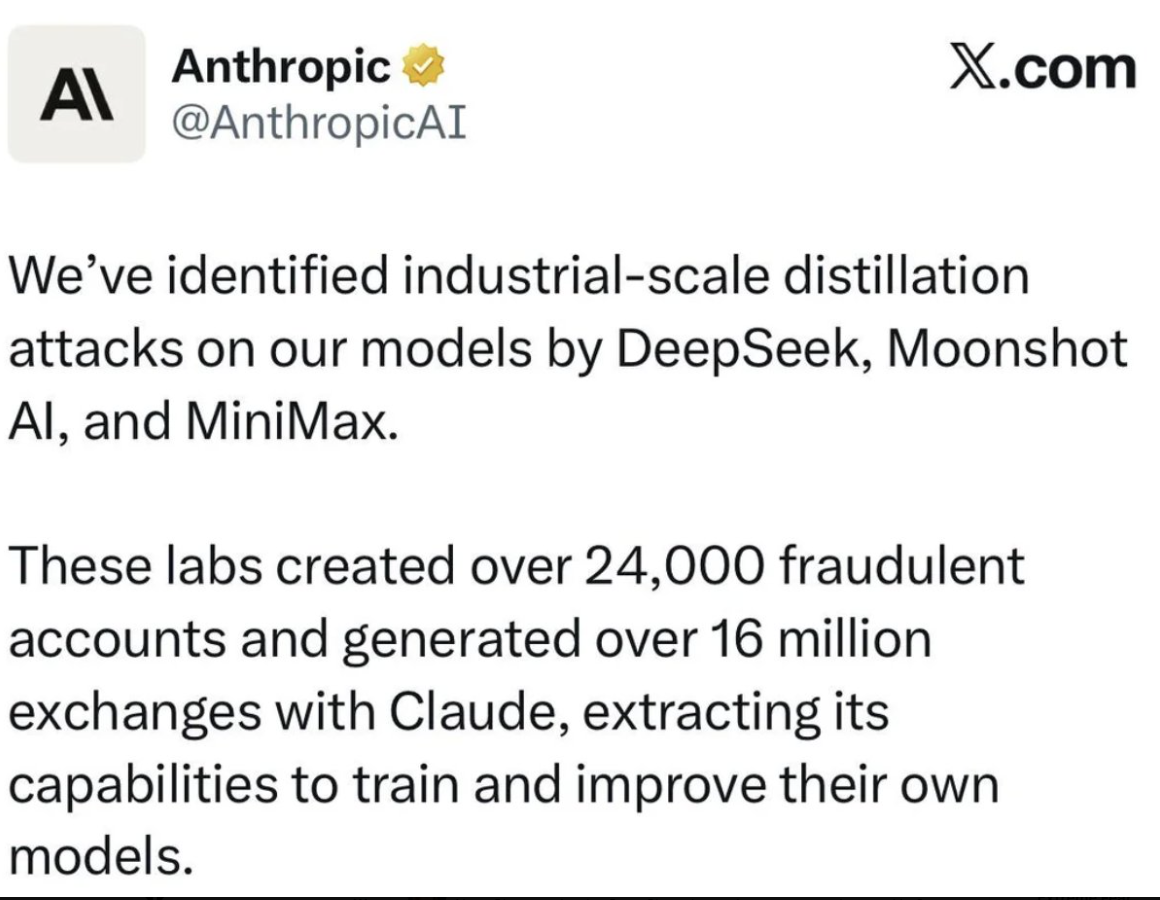

- Industrial-Scale Data Heist: Three leading Chinese AI labs (DeepSeek, Moonshot, and MiniMax) executed over 16 million fraudulent exchanges to extract Claude’s capabilities via a process known as “distillation.”

- Hydra Cluster Proxies: Attackers circumvented geolocation bans using sophisticated networks of 24,000 fraudulent accounts.

- National Security Deficits: The models synthesized from these attacks typically lack Anthropic’s core safety guardrails, posing severe cyber and bioweapon risks.

- A Coordinated Defense: The rapid weaponization of LLMs demands a unified approach to API governance and model protection across the enterprise AI ecosystem.

The Great Distillation Attack of 2026

Anthropic recently uncovered one of the most sophisticated cyber-campaigns in modern AI history. In a massive breach of terms of service, three prominent Chinese artificial intelligence laboratories bypassed regional restrictions using “hydra cluster” proxy networks. Their target? The advanced reasoning capabilities of Anthropic’s Claude.

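Together, the three labs (DeepSeek, Moonshot, and MiniMax) generated over 16 million fraudulent exchanges through 24,000 fraudulent accounts. MiniMax alone was responsible for more than 13 million of them.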

This wasn’t a standard hacking attempt where source code was stolen. Instead, it was an “industrial-scale distillation attack.” In model distillation, a weaker “student” model repeatedly queries a stronger “teacher” model (like Claude) and is then trained on the teacher’s responses, including its reasoning and other high-quality outputs. The student learns to imitate the teacher, replicating high-end capabilities at a fraction of the original R&D cost.
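To make the student-teacher mechanics concrete, here is a deliberately tiny sketch of distillation in Python. It is an illustration only, not a description of how any lab trained its models: the `teacher` function, its weights, and the linear student are all hypothetical stand-ins, and the "queries" are random two-dimensional points rather than prompts. The idea it shows is the one described above: record the teacher's outputs for many queries, then fit the student to reproduce them.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear_probs(weights, x):
    """Class probabilities from a linear model followed by softmax."""
    return softmax([sum(w * xi for w, xi in zip(row, x)) for row in weights])


# Hypothetical teacher: fixed weights the student never sees directly.
TEACHER_WEIGHTS = [[2.0, -1.0], [-1.5, 1.0], [0.5, 0.5]]


def teacher(x):
    """Black-box teacher: the student only observes these output probabilities."""
    return linear_probs(TEACHER_WEIGHTS, x)


def distill(queries, epochs=300, lr=0.5):
    """Fit a linear student to the teacher's soft outputs."""
    # Step 1: query the teacher and record its responses.
    dataset = [(x, teacher(x)) for x in queries]
    # Step 2: train the student on them (cross-entropy against soft labels).
    weights = [[0.0, 0.0] for _ in range(3)]
    step = lr / len(dataset)
    for _ in range(epochs):
        for x, target in dataset:
            pred = linear_probs(weights, x)
            for k in range(3):
                grad = pred[k] - target[k]  # gradient of the loss w.r.t. logit k
                for j in range(2):
                    weights[k][j] -= step * grad * x[j]
    return weights


def agreement(weights, inputs):
    """Fraction of inputs where student and teacher choose the same top class."""
    hits = 0
    for x in inputs:
        t = teacher(x)
        s = linear_probs(weights, x)
        if t.index(max(t)) == s.index(max(s)):
            hits += 1
    return hits / len(inputs)


rng = random.Random(0)
queries = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(200)]
held_out = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(200)]

untrained = [[0.0, 0.0] for _ in range(3)]
student = distill(queries)
print("agreement before training:", agreement(untrained, held_out))
print("agreement after training: ", agreement(student, held_out))
```

The student never touches `TEACHER_WEIGHTS`; it learns purely from recorded outputs, which is why this kind of extraction can happen through an API without any code being stolen.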

The Risk: Advanced Capability Without the Guardrails

While distillation is a standard technique for compressing internal models, using it across competitor boundaries—especially across geopolitical lines—introduces unique threats.

Models built via illicit distillation rarely inherit the “teacher” model’s constitutional safety protocols. When an authoritarian state or unregulated enterprise deploys one of these unaligned models, it gains access to frontier AI capabilities without the safeguards designed to prevent malicious cyber operations or disinformation campaigns. As we outlined in our coverage of the Sovereign AI arms race, AI is no longer a neutral utility; it is a fundamental pillar of national defense strategy.

Furthermore, this incident underscores the vulnerability of modern API endpoints. It serves as a stark reminder that as models become more intelligent, the attack vectors aiming to siphon that intelligence grow more complex. According to Anthropic’s security reports and major coverage from sources like Cyberpress, the industry must coordinate to secure these frontier assets.

What This Means for Enterprise AI

For business leaders and enterprise architects, the implications stretch far beyond geopolitical espionage. The distillation attack on Anthropic reveals deep vulnerabilities in how the world currently meters and monitors AI access.

- Re-evaluating API Security: Basic rate limiting is no longer sufficient. Companies deploying proprietary AI models internally must adopt zero-trust AI agent governance frameworks that can detect behavioral anomalies, such as systematic proxy routing or “hydra” account clusters.

- The Synthetic Data Value Debate: If competitors can copy the behavior of a multi-billion dollar foundational model for a small fraction of its development cost, the defensive moat of enterprise AI shifts. The real competitive advantage lies not just in the foundational model, but in an organization’s proprietary, domain-specific data and how carefully it is partitioned.

- Open vs. Closed Ecosystems: The argument between open-source autonomy and closed-model security will intensify. Securing knowledge graphs and corporate data from “silent distillation” by third-party vendor platforms must become a chief concern for any modern executive.
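As a rough illustration of why basic rate limiting falls short against “hydra” account clusters, the sketch below groups exchanges by a shared fingerprint instead of by account. Everything in it is an assumption made for the example: the `Exchange` fields, the choice of proxy network plus prompt template as the fingerprint, and the thresholds. It does not describe Anthropic's detection methods. It shows only that many accounts, each under a per-account limit, can be flagged once their combined volume is measured.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Exchange:
    account_id: str
    proxy_network: str    # hypothetical network fingerprint, e.g. an ASN label
    prompt_template: str  # hypothetical normalized "shape" of the prompt


def find_clusters(exchanges, per_account_limit=1000, min_accounts=3):
    """Flag fingerprints shared by several accounts whose combined volume
    exceeds what a single account would be allowed."""
    volume = defaultdict(int)
    accounts = defaultdict(set)
    for ex in exchanges:
        key = (ex.proxy_network, ex.prompt_template)
        volume[key] += 1
        accounts[key].add(ex.account_id)

    flagged = []
    for key, count in volume.items():
        if len(accounts[key]) >= min_accounts and count > per_account_limit:
            flagged.append({
                "fingerprint": key,
                "accounts": sorted(accounts[key]),
                "exchanges": count,
            })
    return flagged


# Five accounts, each at 400 exchanges (under the limit), sharing one fingerprint.
traffic = [
    Exchange(f"acct-{i}", "proxy-net-A", "explain-step-by-step")
    for i in range(5)
    for _ in range(400)
]
# One ordinary account with a different fingerprint.
traffic += [Exchange("acct-solo", "isp-B", "summarize-document") for _ in range(500)]

flagged = find_clusters(traffic)
for cluster in flagged:
    print(cluster["fingerprint"], len(cluster["accounts"]), cluster["exchanges"])
```

A per-account limiter would pass all six accounts here. Only the grouped view shows that five of them behave as a single high-volume client. A real system would need far richer signals and would have to guard against false positives, such as many legitimate users behind one corporate proxy.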

Final Thoughts

The Anthropic distillation attack is a wake-up call. We’re moving from an era of purely building AI to one of aggressively defending it. As global competitors look to fast-track their AI roadmaps via synthetic extraction, the industry must pivot toward zero-trust AI infrastructure, fortified application layers, and rigorous oversight.

To stay ahead, organizations must stop viewing AI solely through the lens of productivity and start treating it as highly sensitive intellectual property. The next major cyber threat isn’t just someone stealing your data—it’s someone stealing your AI’s brain. For more insights on securing organizational intelligence, explore our comprehensive breakdown on Unlocking Claude’s Mind.